DecoDame

Hello, all.

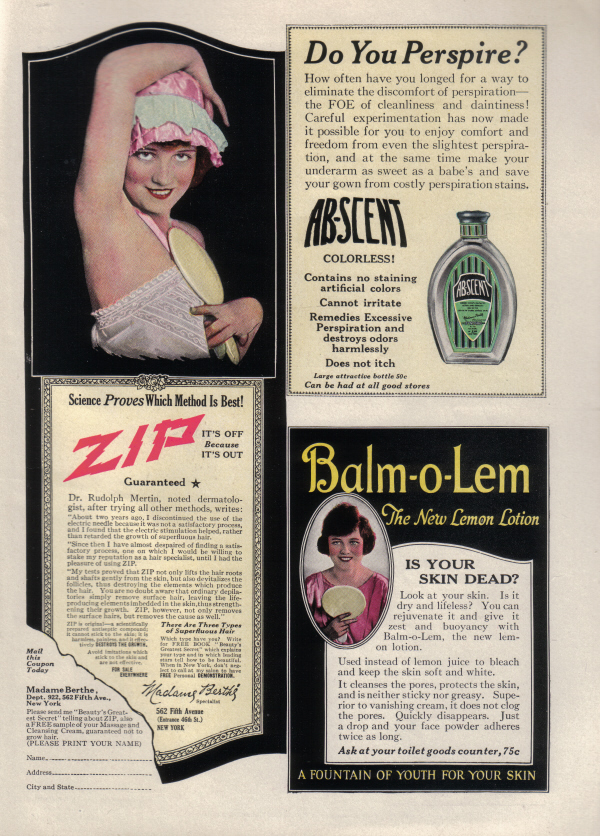

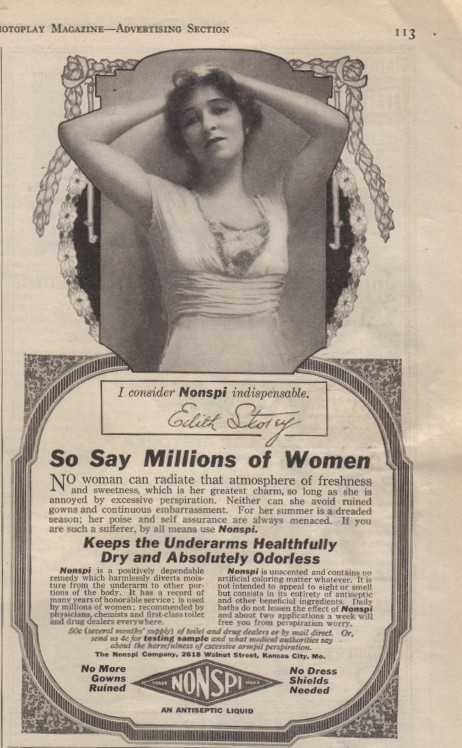

This might seem like an odd, out-of-left-field question, but I was wondering when underarm shaving became the norm for women in the United States?

I've been catching up with "Boardwalk Empire" (love it!), and since it's an HBO show with scenes set in an upscale dress shop, you see a lot of bare arms along with the bare bodies. It struck me that all the actresses are shaved, and I wondered if that was actually historically accurate for 1920.

Was it the sleeveless shift dress fashion of the '20s itself that made underarm shaving the norm here?
